Full lifecycle model management

From model registry to production deployment with monitoring. Canary rollouts, blue-green swaps, drift detection, alerting, and auto-retraining are all built in.

8 deployment strategies for every scenario

From canary rollouts to A/B testing. Deploy models safely with automatic rollback and traffic management.

Canary Deployment

Route a small percentage of traffic to the new model. Gradually increase as health metrics confirm stable behavior. Automatic rollback on degradation.
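As a rough illustration of the ramp-and-rollback idea, the sketch below picks the next canary traffic percentage from a health snapshot. The step sizes, the thresholds, and the names `HealthSnapshot` and `next_canary_weight` are assumptions made for this example, not part of the CorePlexML SDK.

```python
from dataclasses import dataclass


@dataclass
class HealthSnapshot:
    """Health metrics observed on the canary (hypothetical shape)."""
    error_rate: float        # fraction of failed requests, 0.0 to 1.0
    p95_latency_ms: float    # 95th percentile latency in milliseconds


def next_canary_weight(current, snapshot, steps=(5, 25, 50, 100),
                       max_error_rate=0.02, max_p95_ms=250.0):
    """Return the next traffic percentage for the canary.

    A return value of 0 means "roll back": the canary is unhealthy.
    """
    healthy = (snapshot.error_rate <= max_error_rate
               and snapshot.p95_latency_ms <= max_p95_ms)
    if not healthy:
        return 0
    for step in steps:
        if step > current:
            return step
    return current  # already at full traffic
```

Calling the function once per evaluation window gives a simple control loop: traffic only grows while the canary stays inside both limits.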

Blue-Green Swap

Run two identical environments side by side. Switch traffic instantly with zero downtime. Instant rollback by swapping back.
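Conceptually, a blue-green swap is a pointer flip between two environments that are both kept running. The following minimal sketch shows that idea with plain callables standing in for the two environments; `BlueGreenRouter` is a hypothetical name, not an SDK class.

```python
class BlueGreenRouter:
    """Routes all traffic to one of two environments and swaps on demand."""

    def __init__(self, blue, green):
        # Each environment is any callable that maps features to a prediction.
        self._envs = {"blue": blue, "green": green}
        self.live = "blue"

    @property
    def idle(self):
        """Name of the environment that is not serving traffic."""
        return "green" if self.live == "blue" else "blue"

    def swap(self):
        """Flip live and idle. Calling it again is the rollback."""
        self.live = self.idle
        return self.live

    def predict(self, features):
        return self._envs[self.live](features)
```

Because the idle environment is never torn down, rolling back is the same operation as rolling forward.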

Shadow Deployment

The new model receives a copy of production traffic in parallel, but its responses are never served to callers. Compare its outputs against the active model risk-free.
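One way to picture shadow mode: the active model answers the request, while the shadow model's output is only recorded for later comparison. This is a sketch under that assumption; `serve_with_shadow` and the log record layout are hypothetical.

```python
def serve_with_shadow(active, shadow, features, log):
    """Serve the active model's response and record the shadow's output.

    `active` and `shadow` are callables; `log` is a list that collects
    comparison records. A failure in the shadow model never reaches the caller.
    """
    response = active(features)
    try:
        shadow_output = shadow(features)
        log.append({
            "features": features,
            "active": response,
            "shadow": shadow_output,
            "match": response == shadow_output,
        })
    except Exception as exc:  # the shadow must not affect production
        log.append({
            "features": features,
            "active": response,
            "shadow_error": repr(exc),
        })
    return response
```

In a real service the shadow call would normally run asynchronously so it cannot add latency; it is kept synchronous here for brevity.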

A/B Testing

Split traffic between model variants with configurable ratios. Statistical significance testing and automatic winner declaration.
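The source does not say which significance test the platform applies. For conversion-style metrics, one common choice is a two-proportion z-test, sketched here with only the standard library. The function names and the default `alpha` of 0.05 are assumptions for illustration.

```python
import math


def two_proportion_z_test(success_a, total_a, success_b, total_b):
    """Return (z, two-sided p-value) for the difference in success rates."""
    p_a = success_a / total_a
    p_b = success_b / total_b
    pooled = (success_a + success_b) / (total_a + total_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, so nothing to distinguish
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value


def declare_winner(success_a, total_a, success_b, total_b, alpha=0.05):
    """Return "A", "B", or None when the difference is not significant."""
    z, p_value = two_proportion_z_test(success_a, total_a, success_b, total_b)
    if p_value >= alpha:
        return None
    return "B" if z > 0 else "A"
```

Checking the result repeatedly while the test is still running inflates false positives, so a fixed sample size or a sequential testing correction is usually needed in practice.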

Progressive Rollout

Multi-stage rollout with health gates. Define traffic percentages and validation criteria for each stage before proceeding.
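A staged rollout with health gates can be described as data: each stage carries a traffic percentage plus the criteria it must meet before the next stage starts. The sketch below assumes accuracy and p95 latency as the gate metrics; `Stage`, `run_rollout`, and the `observe` callback are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    traffic_percent: int
    min_accuracy: float
    max_p95_latency_ms: float


def run_rollout(stages, observe):
    """Walk the stages in order, stopping at the first failed gate.

    `observe(traffic_percent)` must return a dict with the keys
    "accuracy" and "p95_latency_ms" measured at that traffic level.
    Returns (completed, last_traffic_percent_that_passed).
    """
    reached = 0
    for stage in stages:
        metrics = observe(stage.traffic_percent)
        gate_failed = (metrics["accuracy"] < stage.min_accuracy
                       or metrics["p95_latency_ms"] > stage.max_p95_latency_ms)
        if gate_failed:
            return False, reached
        reached = stage.traffic_percent
    return True, reached
```

Keeping the criteria per stage lets early stages use looser gates than the final one.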

Staging to Production

Deploy to staging for validation. Promote to production after manual or automated approval with full audit trail.

Key Capabilities

Everything you need to get the most out of this module.

Model Registry

Version, stage, and track metadata for every model. Full lineage from data to deployment.

Deployment Strategies

Canary, blue-green, shadow, and A/B deployment strategies with automatic traffic management.

Monitoring & Drift

Statistical tests detect data and concept drift. Performance tracking with configurable alerts.

Auto-Retraining

Drift-triggered, scheduled, or performance-based retraining pipelines that keep models fresh.

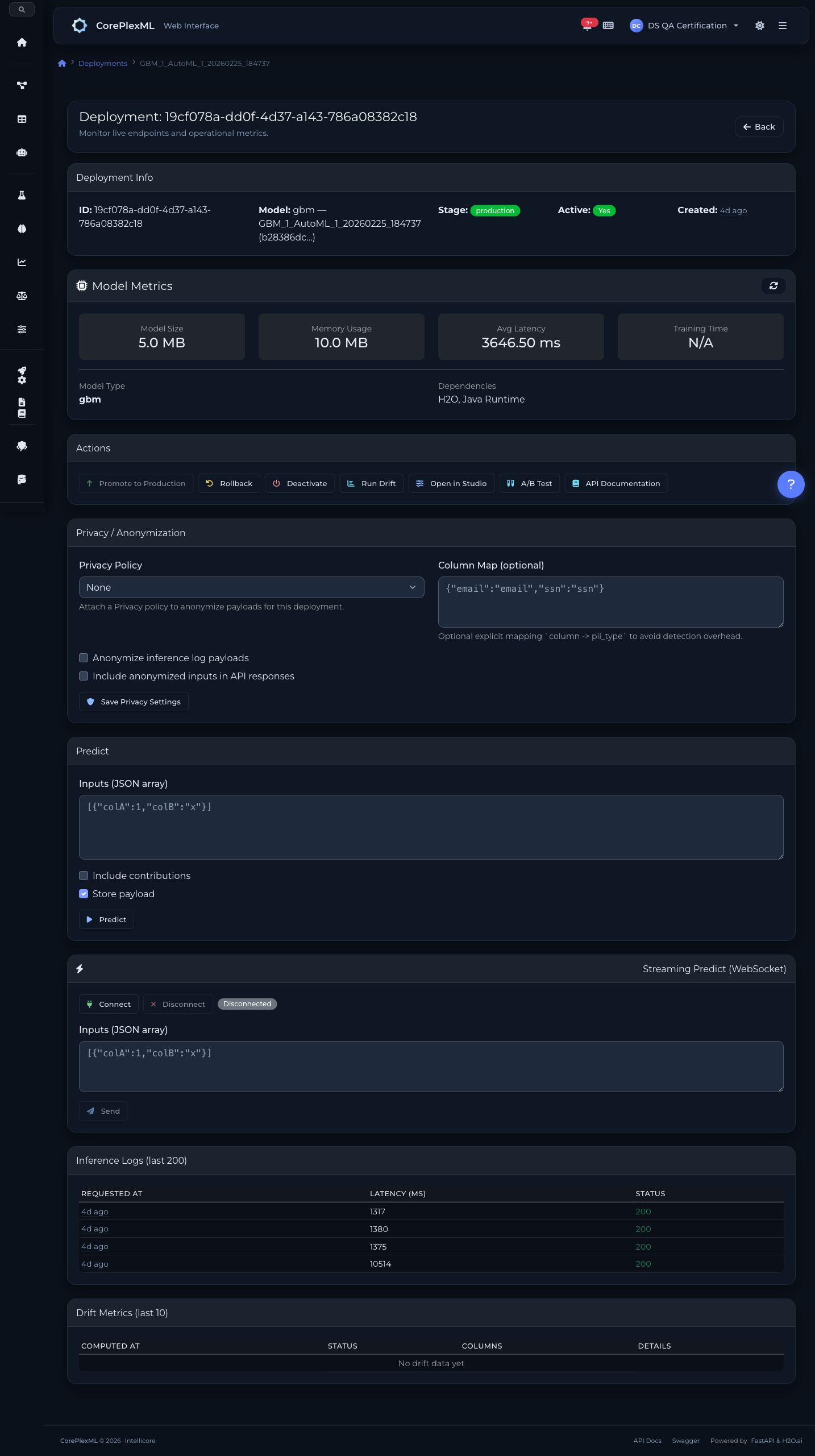

Detect issues before they impact production

Comprehensive monitoring with drift detection, latency tracking, and multi-channel alerting.

Drift Detection (PSI)

Population Stability Index tracks feature and prediction distribution shifts. Configurable thresholds trigger alerts or retraining.
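For reference, PSI compares the binned distribution of a baseline sample with a recent sample: PSI = Σ (aᵢ − eᵢ) · ln(aᵢ / eᵢ), where eᵢ and aᵢ are the baseline and recent proportions in bin i. The sketch below is a plain-Python version with equal-width bins; the bin count and the small `eps` floor for empty bins are assumptions, and the platform's own binning may differ.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

Identical samples give a PSI of exactly 0, and the value grows as the recent distribution moves away from the baseline.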

Accuracy Tracking

Continuous performance monitoring against labeled data. Detect concept drift before it impacts business outcomes.

Latency Monitoring

Track p50, p95, and p99 inference latency. Alert on degradation to catch infrastructure issues early.
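The percentiles themselves are simple to compute from raw latency samples. Here is a minimal sketch using Python's standard `statistics.quantiles`; the function name and the returned dict layout are illustrative choices.

```python
import statistics


def latency_percentiles(samples_ms):
    """Return p50, p95, and p99 for a list of latency samples (milliseconds)."""
    # n=100 yields 99 cut points; cuts[k - 1] is the k-th percentile.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}
```

Tail percentiles such as p99 need a reasonably large sample to be stable, so they are usually computed over a time window rather than per request batch.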

Inference Logging

Every prediction logged with input features, output, latency, and metadata. Full request-level audit for compliance.

Multi-Channel Alerts

Slack, Email, and Webhook channels. Configurable cooldown periods, severity levels (info, warning, critical), and escalation rules.
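A cooldown period simply suppresses repeat notifications for the same rule until enough time has passed. The sketch below assumes timestamps in seconds; `AlertRule` and `should_notify` are hypothetical names, while the severity levels are the three listed above.

```python
from dataclasses import dataclass, field
from typing import Optional

SEVERITIES = ("info", "warning", "critical")


@dataclass
class AlertRule:
    name: str
    severity: str
    cooldown_seconds: float
    _last_sent: Optional[float] = field(default=None, repr=False)

    def __post_init__(self):
        if self.severity not in SEVERITIES:
            raise ValueError(f"unknown severity: {self.severity!r}")

    def should_notify(self, now):
        """Return True if an alert may be sent at time `now` (seconds)."""
        in_cooldown = (self._last_sent is not None
                       and now - self._last_sent < self.cooldown_seconds)
        if in_cooldown:
            return False
        self._last_sent = now
        return True
```

An escalation rule could build on this, for example by raising the severity after several suppressed alerts in a row.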

Auto-Retraining

Drift-triggered, schedule-based, or performance-based retraining. Automatic promotion when validation passes improvement thresholds.
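The three triggers and the promotion check can be expressed as two small decisions. In this sketch the threshold defaults (PSI 0.15, minimum accuracy 0.90, 30 days, 0.01 improvement) and the function names are assumptions chosen for illustration.

```python
def retraining_trigger(psi, accuracy, days_since_training,
                       psi_threshold=0.15, min_accuracy=0.90, max_age_days=30):
    """Return which trigger fires ("drift", "performance", "schedule") or None."""
    if psi > psi_threshold:
        return "drift"
    if accuracy < min_accuracy:
        return "performance"
    if days_since_training >= max_age_days:
        return "schedule"
    return None


def should_promote(candidate_score, production_score, min_improvement=0.01):
    """Promote only if the retrained model beats production by the margin."""
    return candidate_score - production_score >= min_improvement
```

Requiring a minimum improvement, rather than any improvement, keeps noise in the validation metric from causing needless promotions.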

Deploy and monitor with code

Full deployment lifecycle from the SDK — create, promote, predict, monitor drift, and configure auto-retraining.

```python
from coreplexml import CorePlexMLClient

client = CorePlexMLClient(
    base_url="https://api.coreplexml.io",
    api_key="sk_your_api_key"
)

# Deploy model to staging
deployment = client.deployments.create(
    project_id="proj_abc",
    model_id="mod_best_xgb",
    name="fraud-detector-v2",
    stage="staging"
)

# Promote to production
client.deployments.promote(deployment["id"])

# Real-time predictions
result = client.deployments.predict(
    deployment_id=deployment["id"],
    inputs={"amount": 9500, "merchant": "electronics", "hour": 2}
)
print(f"Fraud probability: {result['probability']:.2%}")

# Check drift metrics
drift = client.deployments.drift(deployment["id"])
print(f"PSI: {drift['psi']:.4f}")

# Set up auto-retraining on drift
client.retraining.create_policy(
    deployment_id=deployment["id"],
    trigger="drift",
    threshold=0.15,
    auto_promote=True
)
```

MLOps API

160+ endpoints for deployments, model registry, canary/blue-green/shadow strategies, A/B testing, monitoring, alerting, and auto-retraining.

| Endpoint | Description |
| --- | --- |
| `/api/mlops/projects/{id}/deployments` | Create deployment (staging or production) |
| `/api/mlops/deployments/{id}/predict` | Real-time inference endpoint |
| `/api/mlops/deployments/{id}/promote` | Promote staging to production |
| `/api/mlops/deployments/{id}/drift` | Drift detection metrics (PSI, data drift, concept drift) |
| `/api/mlops/deployments/{id}/canary` | Start canary deployment with traffic stages and auto-rollback |
| `/api/mlops/deployments/{id}/blue-green` | Create blue-green deployment with instant swap capability |
| `/api/mlops/deployments/{id}/shadow` | Start shadow deployment for passive model validation |
| `/api/mlops/ab-tests` | Create A/B test between model variants |
| `/api/mlops/ab-tests/{id}/results` | Statistical results with confidence intervals |
| `/api/mlops/registry/versions` | Create model registry version with semantic versioning |
| `/api/mlops/registry/versions/{id}/stage` | Transition version stage (dev → staging → prod → archived) |
| `/api/mlops/retraining-policies` | Configure auto-retraining triggers (schedule, drift, perf) |
| `/api/mlops/projects/{id}/alert-rules` | Create monitoring alert rules with severity and cooldown |
| `/api/mlops/notification-channels` | Configure Slack, email, or webhook notification channels |
| `/api/predictions/batch` | Start batch prediction job with CSV upload |
| `/ws/predictions/{id}/stream` | WebSocket streaming for real-time batch inference progress |
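To show how the predict endpoint might be called without the SDK, the sketch below builds (but does not send) an HTTP request with the standard library. Only the path and the base URL come from this page. The `POST` method, the bearer-token `Authorization` header, and the `{"inputs": ...}` body shape are assumptions, so check the API reference before relying on them.

```python
import json
import urllib.request

BASE_URL = "https://api.coreplexml.io"


def build_predict_request(deployment_id, inputs, api_key):
    """Build a request for the predict endpoint (assumed POST + bearer auth)."""
    url = f"{BASE_URL}/api/mlops/deployments/{deployment_id}/predict"
    body = json.dumps({"inputs": inputs}).encode("utf-8")  # assumed body shape
    return urllib.request.Request(
        url,
        data=body,
        method="POST",  # assumption: the page does not list HTTP methods
        headers={
            "Authorization": f"Bearer {api_key}",  # assumption: auth scheme
            "Content-Type": "application/json",
        },
    )
```

Passing the returned object to `urllib.request.urlopen` would send it; that step is left out here so the example runs without network access or a real key.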

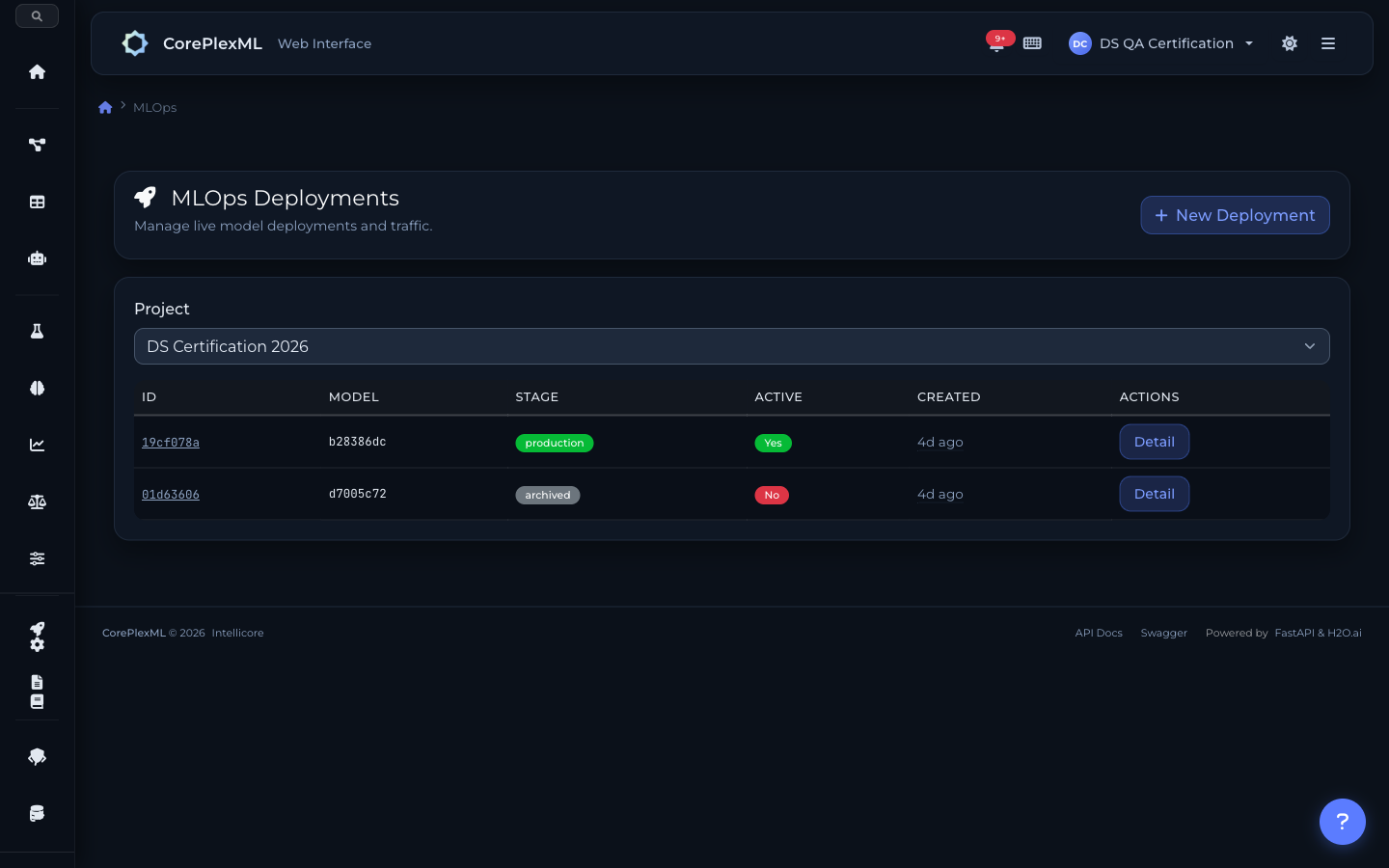

Deployments, monitoring, and analysis

Deployment list with staging and production stages

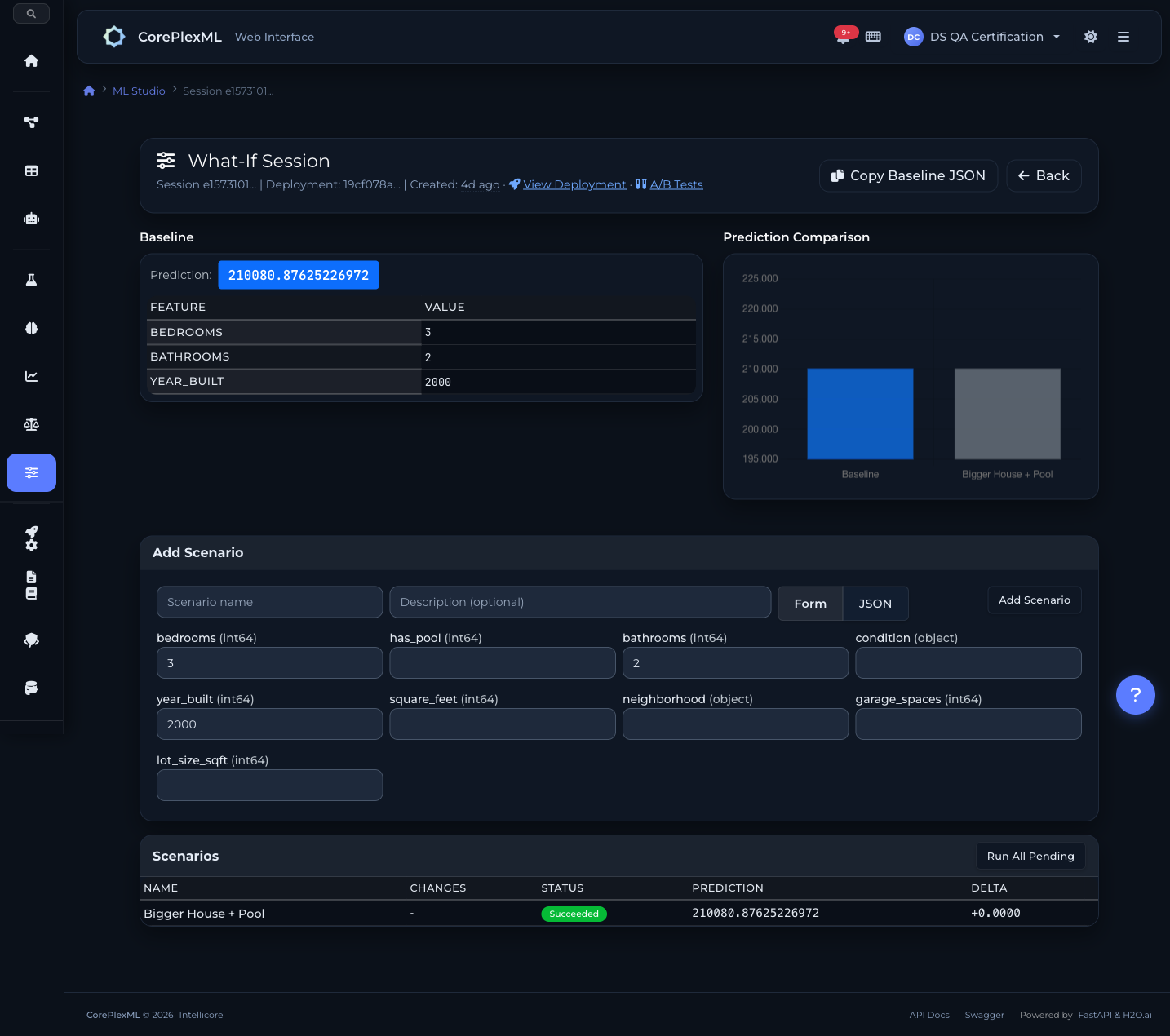

What-If Studio for scenario analysis

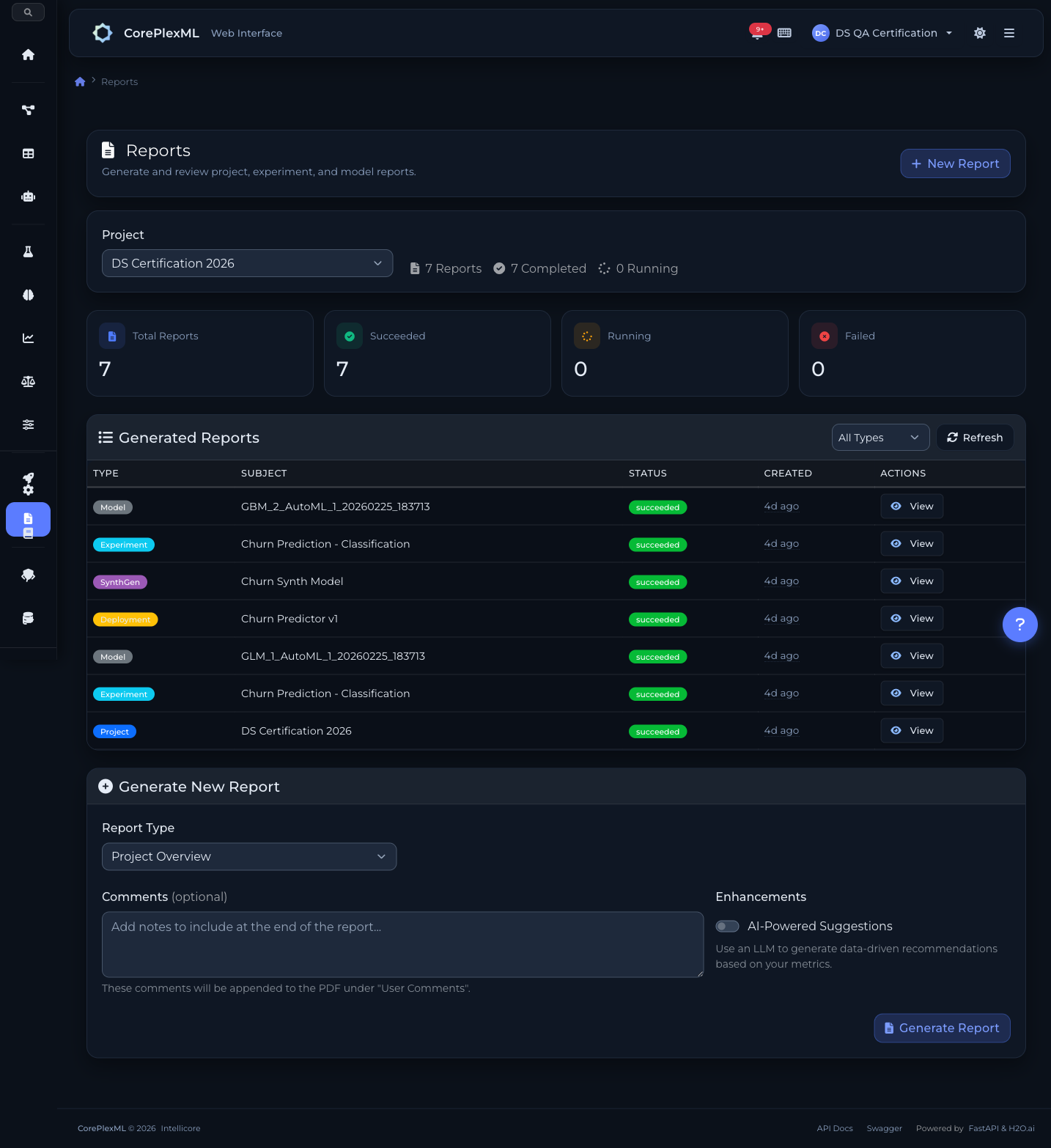

7 report types with one-click generation

Ready to get started?

Start building with CorePlexML today. Free tier available — no credit card required.